torch_study_2

"/home/yossef/notes/personal/ml/torch_study/torch_study_2.md"

path: personal/ml/torch_study/torch_study_2.md

- **fileName**: torch_study_2

- **Created on**: 2026-04-02 19:00:36

import torch

import torch.nn as nn

import torch.optim as optim

import sys; sys.path.append('assets/scripts'); import helper_utils

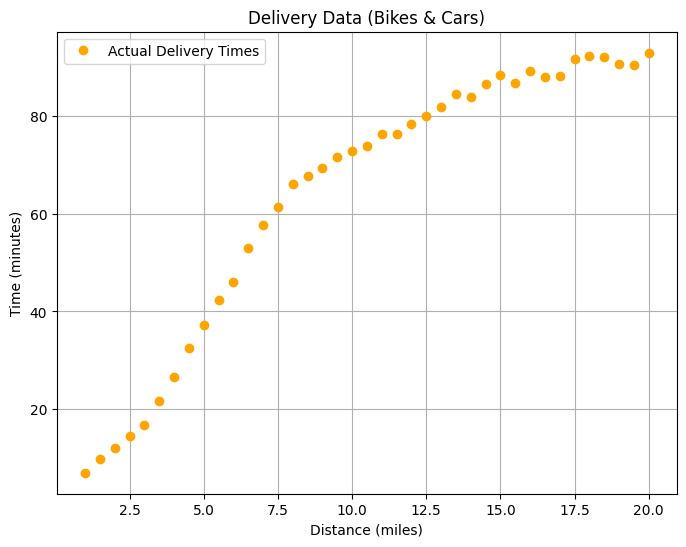

Now we train a new model that can learn a non-linear relationship in the data. We use the ReLU activation function to add non-linearity to the model, and train it on the bike and car delivery data (distances and times).
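To see what ReLU actually does, here is a minimal sketch on a few made-up values (the numbers are only for illustration, they are not from the delivery data): negatives become 0 and positives pass through unchanged.

```python
import torch
import torch.nn as nn

# Made-up sample values, only to show the shape of the function
x = torch.tensor([-2.0, -0.5, 0.0, 1.5, 3.0])

relu = nn.ReLU()
y = relu(x)  # ReLU(x) = max(0, x), applied element-wise

print(y)
```

That "bend" at zero is what lets a stack of linear layers fit a curve instead of a single straight line.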

## creating the data

## Note: this is clean, hand-formatted data; real-world data will never look this tidy

distances = torch.tensor([

[1.0], [1.5], [2.0], [2.5], [3.0], [3.5], [4.0], [4.5], [5.0], [5.5],

[6.0], [6.5], [7.0], [7.5], [8.0], [8.5], [9.0], [9.5], [10.0], [10.5],

[11.0], [11.5], [12.0], [12.5], [13.0], [13.5], [14.0], [14.5], [15.0], [15.5],

[16.0], [16.5], [17.0], [17.5], [18.0], [18.5], [19.0], [19.5], [20.0]

], dtype=torch.float)

times = torch.tensor([

[6.96], [9.67], [12.11], [14.56], [16.77], [21.7], [26.52], [32.47], [37.15], [42.35],

[46.1], [52.98], [57.76], [61.29], [66.15], [67.63], [69.45], [71.57], [72.8], [73.88],

[76.34], [76.38], [78.34], [80.07], [81.86], [84.45], [83.98], [86.55], [88.33], [86.83],

[89.24], [88.11], [88.16], [91.77], [92.27], [92.13], [90.73], [90.39], [92.98]

], dtype=torch.float)

helper_utils.plot_data(distances, times)

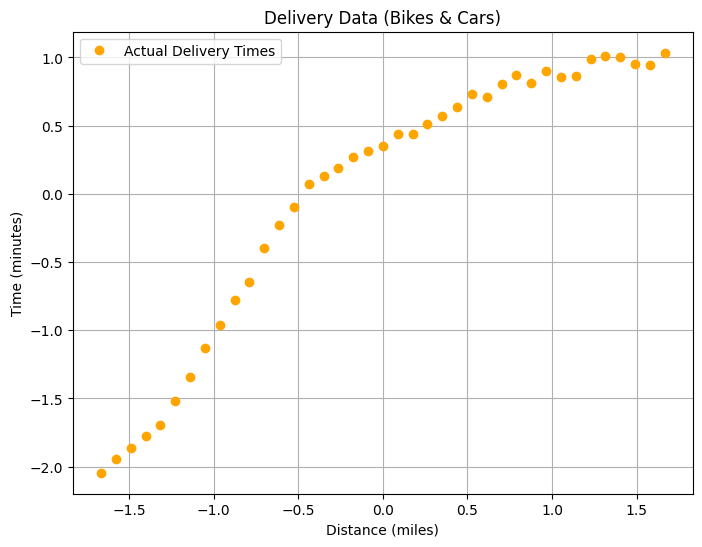

So what is normalization (z-scores, standardization)?

- The training algorithm has a weakness that makes training take a little longer. It is sensitive to the raw size of the numbers, so a feature with a bigger range looks more important. For example, if distances range over (0, 5000) and times over (0, 200), the model starts out biased toward distances. It still learns the right fit in the end, but that early bias costs extra time and resources.

- To fix this we apply an operation called normalization, which rescales the values into a small range, for example distances in (1, 2) and times in (-1, 1). With z-scores specifically, each column ends up with mean 0 and standard deviation 1. The values are small and comparable, so the model does not start out biased toward any feature.

The bias shows up when training changes the values of the model's weight and bias: the large-scale values dominate those updates.
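A minimal sketch of that idea, assuming two made-up columns on very different scales (the values and the `zscore` helper are hypothetical, chosen to match the example ranges above): after z-scoring, both columns have mean 0 and standard deviation 1.

```python
import torch

def zscore(x: torch.Tensor) -> torch.Tensor:
    """Standardize x to mean 0 and standard deviation 1."""
    return (x - x.mean()) / x.std()

# Made-up values on very different scales, like the ranges in the note
raw_distances = torch.tensor([100.0, 1200.0, 2500.0, 4000.0, 5000.0])
raw_times = torch.tensor([5.0, 40.0, 90.0, 150.0, 200.0])

d_norm = zscore(raw_distances)
t_norm = zscore(raw_times)

# Both columns now live on the same scale
print(d_norm.mean().item(), d_norm.std().item())
print(t_norm.mean().item(), t_norm.std().item())
```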

distances_mean = distances.mean()

distances_std = distances.std()

print(f"the mean for distances: {distances_mean}")

print(f"the std for distances: {distances_std}")

the mean for distances: 10.5

the std for distances: 5.7008771896362305

times_mean = times.mean()

times_std = times.std()

print(f"the mean for times: {times_mean}")

print(f"the std for times: {times_std}")

the mean for times: 64.07128143310547

the std for times: 27.938106536865234

# using z-score method

distances_norm = (distances - distances_mean) / distances_std

times_norm = (times - times_mean) / times_std

print(f"the distances norm {distances_norm[:5]}")

print(f"the times norm {times_norm[:5]}")

the distances norm tensor([[-1.6664], [-1.5787], [-1.4910], [-1.4033], [-1.3156]])

the times norm tensor([[-2.0442], [-1.9472], [-1.8599], [-1.7722], [-1.6931]])

helper_utils.plot_data(distances_norm, times_norm)

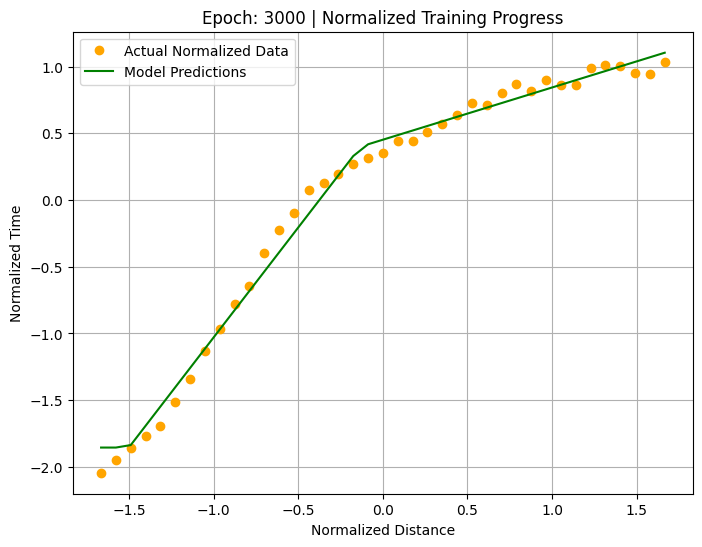

Building the model

1. Make one hidden layer with 3 units, (1, 3): 1 input value, 3 output values.

2. Apply the activation function (ReLU) to the hidden layer's output to add non-linearity. This is the important step that lets the model learn curves, not just a straight line.

3. Add the output layer (3, 1).

Hint: how do you identify the output layer's input and output sizes? (3, 1) means 3 input values and one output value.

Setting up this layer is easy: check the hidden layer before it. That layer's output size is this layer's input size.
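The size-matching rule from the hint can be checked with a quick sketch. This assumes a dummy batch of zeros (not the real data) just to watch the shapes flow through the two layers.

```python
import torch
import torch.nn as nn

hidden = nn.Linear(1, 3)   # 1 input feature -> 3 hidden units
output = nn.Linear(3, 1)   # must take 3 inputs, because hidden produces 3

x = torch.zeros(5, 1)      # a dummy batch of 5 samples, 1 feature each
h = torch.relu(hidden(x))
y = output(h)

print(h.shape)  # torch.Size([5, 3])
print(y.shape)  # torch.Size([5, 1])

# Count weights + biases: (1*3 + 3) for hidden, (3*1 + 1) for output
n_params = sum(p.numel() for p in hidden.parameters()) \
         + sum(p.numel() for p in output.parameters())
print(n_params)
```

If the output layer were declared with any input size other than 3, the call `output(h)` would fail with a shape mismatch error.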

# fix the random seed so the model's starting weights and biases are the same on every run

torch.manual_seed(42)

model = nn.Sequential(

nn.Linear(1, 3),

nn.ReLU(),

nn.Linear(3,1)

)

##### training: create the loss function and the optimizer

loss_fn = nn.MSELoss()

optimizer = optim.SGD(model.parameters(), lr=0.01)

# Training loop

for epoch in range(3000):

    # Reset the optimizer's gradients

    optimizer.zero_grad()

    # Make predictions (forward pass)

    outputs = model(distances_norm)

    # Calculate the loss

    loss = loss_fn(outputs, times_norm)

    # Calculate adjustments (backward pass)

    loss.backward()

    # Update the model's parameters

    optimizer.step()

    # Create a live plot every 50 epochs

    if (epoch + 1) % 50 == 0:

        helper_utils.plot_training_progress(

            epoch=epoch,

            loss=loss,

            model=model,

            distances_norm=distances_norm,

            times_norm=times_norm

        )

print("\nTraining Complete.")

print(f"\nFinal Loss: {loss.item()}")

Training Complete.

Final Loss: 0.00779527286067605
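Why does the loop reset the gradients at the start of every epoch? A minimal sketch with a single made-up parameter `w` (not part of the model above): PyTorch adds each new gradient to the stored one, so without a reset the values pile up.

```python
import torch

w = torch.tensor(2.0, requires_grad=True)

(3 * w).backward()
first = w.grad.item()        # d(3w)/dw = 3

(3 * w).backward()           # no reset in between
second = w.grad.item()       # 3 + 3 = 6, the gradients were summed

w.grad.zero_()               # clear the stored gradient by hand
after_reset = w.grad.item()

print(first, second, after_reset)
```

Here the gradient is cleared manually with `w.grad.zero_()`; `optimizer.zero_grad()` plays the same role for every parameter the optimizer manages.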

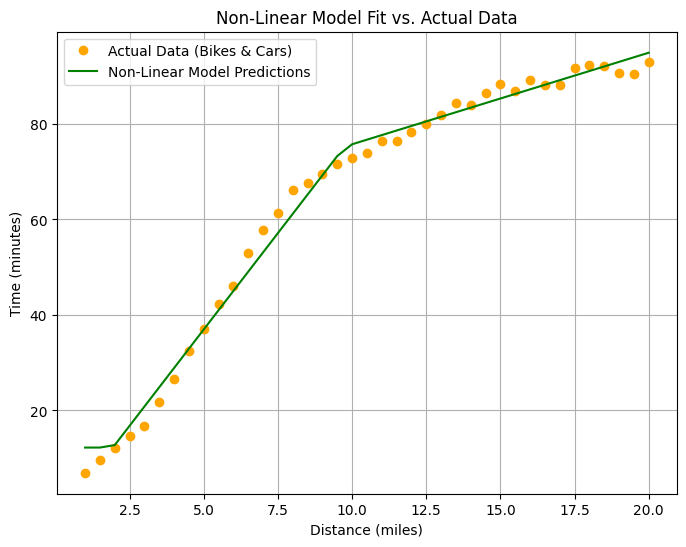

helper_utils.plot_final_fit(model, distances, times, distances_norm, times_std, times_mean)

So now we have built our first model that handles non-linear data, and plotted the fit for this data.

What about prediction? We want to test the model.

- Take a new input, for example new_distance = 20.

Hint: we must apply the normalization step again. Why? Because the model was trained on normalized data.

So we normalize the new distance with the same mean and std we used during training, then pass it to the model. The model's output is a normalized time, so we convert it back to real units (minutes) using the times mean and std.

## torch.no_grad() makes prediction cheaper in time and memory.

## During training PyTorch tracks every operation so it can compute gradients,

## which costs time and resources. Prediction is not training, so we tell

## PyTorch to skip the tracking it will not need.

with torch.no_grad():

    # create the tensor for the distance we want a prediction for

    pred_distance = torch.tensor([5.1], dtype=torch.float)

    # normalization, using the training mean and std

    pred_distance_norm = (pred_distance - distances_mean) / distances_std

    # denormalization is the inverse of the line above:

    # (pred_distance - distances_mean) = (pred_distance_norm * distances_std)

    # pred_distance = (pred_distance_norm * distances_std) + distances_mean

    # get the value from the model (a normalized time)

    new_output = model(pred_distance_norm)

    # convert the normalized output back to real minutes

    new_output_real = (new_output * times_std) + times_mean

print(f"the distance prediction : {pred_distance}, distance norm: {pred_distance_norm}")

print(f"the time prediction : {new_output_real}, time norm: {new_output}")

the distance prediction : tensor([5.1000]), distance norm: tensor([-0.9472])

the time prediction : tensor([37.7663]), time norm: tensor([-0.9415])
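A small sketch of what `torch.no_grad()` changes, assuming a tiny stand-in layer (not the trained model): the numbers coming out are identical, but inside the block PyTorch does not record anything for gradients.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
layer = nn.Linear(1, 1)          # a tiny stand-in model, not the trained one
x = torch.tensor([[0.5]])

tracked = layer(x)               # normal call: autograd records the operation
with torch.no_grad():
    untracked = layer(x)         # same numbers, but nothing is recorded

print(tracked.requires_grad)     # True
print(untracked.requires_grad)   # False
print(torch.equal(tracked, untracked))
```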

# --- Decision Making Logic ---

print(f"Prediction for a {pred_distance}-mile delivery: {new_output_real.item():.1f} minutes")

# First, check if the delivery is possible within the 45-minute timeframe

if new_output_real.item() > 45:

    print("\nDecision: Do NOT promise the delivery in under 45 minutes.")

else:

    # If it is possible, then determine the vehicle based on the distance

    # (compare the distance here, not the predicted time)

    if pred_distance.item() <= 3:

        print(f"\nDecision: Yes, delivery is possible. Since the distance is {pred_distance} miles (<= 3 miles), use a bike.")

    else:

        print(f"\nDecision: Yes, delivery is possible. Since the distance is {pred_distance} miles (> 3 miles), use a car.")

Prediction for a tensor([5.1000])-mile delivery: 37.8 minutes

Decision: Yes, delivery is possible. Since the distance is tensor([5.1000]) miles (> 3 miles), use a car.
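The prediction and decision steps could be wrapped into two small functions so they are easy to reuse. This is only a sketch: `predict_minutes` and `decide` are hypothetical names, the mean and std values are rounded, and `nn.Identity` stands in for the trained model, so it demonstrates the plumbing rather than real predictions.

```python
import torch
import torch.nn as nn

def predict_minutes(model, miles, d_mean, d_std, t_mean, t_std):
    """Normalize the distance, run the model, convert the output back to minutes."""
    with torch.no_grad():
        x = torch.tensor([miles], dtype=torch.float)
        x_norm = (x - d_mean) / d_std
        y_norm = model(x_norm)
        return (y_norm * t_std + t_mean).item()

def decide(miles, minutes, max_minutes=45.0, bike_max_miles=3.0):
    """Same rules as the note: time limit first, then vehicle by distance."""
    if minutes > max_minutes:
        return "do not promise"
    return "bike" if miles <= bike_max_miles else "car"

# Stand-in for the trained model: nn.Identity just returns its input
stand_in = nn.Identity()
minutes = predict_minutes(stand_in, 5.1,
                          d_mean=10.5, d_std=5.7,
                          t_mean=64.07, t_std=27.94)
print(f"{minutes:.1f} minutes -> {decide(5.1, minutes)}")
```

To use it with the real model, pass `model`, `distances_mean`, `distances_std`, `times_mean` and `times_std` from the cells above.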

before:torch_study_1

continue:torch_study_3